Yesterday Anthropic shipped Claude Opus 4.7. Today they pushed Claude Code 2.1.112 to fix the bug where auto mode kept saying "claude-opus-4-7 is temporarily unavailable."

I've been running on xhigh effort all morning. It's a different tool than Opus 4.6.

If you skim the VentureBeat piece, you'll see Anthropic narrowly retaking the lead for the most powerful generally available model. That's technically true. The more useful framing: SWE-bench Verified jumped from 80.8% on 4.6 to 87.6% on 4.7, Claude Code now defaults to its new xhigh effort level on every plan, and Max subscribers get auto mode on Opus 4.7 out of the box.

Here's what actually changed, what broke (briefly), and whether you should migrate today.

Opus 4.7 in one paragraph

Same price as Opus 4.6 ($5 input / $25 output per million tokens). Context window is still 1M tokens, but it uses a new tokenizer, so roughly 555k English words now fit where 750k did on 4.6. Max output bumped to 128k. Vision accepts 3.75 megapixel images (about 3x the previous limit), which matters for computer use, diagram reading, and pixel-level design reviews. Training data cutoff is January 2026.
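The 555k-versus-750k figure implies that the new tokenizer spends about 1.35x as many tokens on the same English text. Below is a minimal sketch of that back-of-envelope conversion. It assumes the ratio holds on average for English prose; the ratio for code and structured data is likely different. The function names are hypothetical.

```python
# Figures from this post: the same 1M-token window holds roughly
# 750k English words on Opus 4.6 and roughly 555k on Opus 4.7.
WORDS_PER_WINDOW_46 = 750_000
WORDS_PER_WINDOW_47 = 555_000

# Implied inflation: how many Opus 4.7 tokens one Opus 4.6 token of English
# prose becomes. A rough average, not a per-text guarantee.
TOKEN_INFLATION = WORDS_PER_WINDOW_46 / WORDS_PER_WINDOW_47  # ~1.35


def estimate_opus47_tokens(opus46_tokens: int) -> int:
    """Rough size of an English-prose prompt under the new tokenizer."""
    return round(opus46_tokens * TOKEN_INFLATION)


def fits_in_window(opus46_tokens: int, window: int = 1_000_000) -> bool:
    """Would a prompt measured on Opus 4.6 still fit in Opus 4.7's 1M window?"""
    return estimate_opus47_tokens(opus46_tokens) <= window


if __name__ == "__main__":
    for n in (100_000, 700_000, 800_000):
        print(n, estimate_opus47_tokens(n), fits_in_window(n))
```

With these figures, a prompt that measured 800k tokens on 4.6 would no longer fit.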

One technical change worth flagging: Opus 4.7 swaps "extended thinking" for "adaptive thinking" (the model decides when to reason longer). If you had hard-coded thinking budgets in your API calls, test them.
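The first step in testing those budgets is finding them. Extended thinking was configured in Messages API requests as `{"type": "enabled", "budget_tokens": N}`. The sketch below scans request payloads (plain dicts shaped like Messages API kwargs) for calls that target Opus 4.7 but still pin a budget. It only locates the pins. Whether Opus 4.7 rejects, ignores, or reinterprets a `budget_tokens` value is the question your tests need to answer. The function name is hypothetical.

```python
from typing import Any

TARGET_MODEL = "claude-opus-4-7"


def find_hardcoded_budgets(requests: list[dict[str, Any]]) -> list[int]:
    """Return indexes of requests that target Opus 4.7 but still pin a thinking budget."""
    flagged = []
    for i, req in enumerate(requests):
        thinking = req.get("thinking") or {}
        # Only an explicit budget on a 4.7 request is worth retesting here.
        if req.get("model") == TARGET_MODEL and "budget_tokens" in thinking:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    requests = [
        {"model": "claude-opus-4-7", "max_tokens": 16_000,
         "thinking": {"type": "enabled", "budget_tokens": 8_000}},
        {"model": "claude-opus-4-6", "max_tokens": 16_000,
         "thinking": {"type": "enabled", "budget_tokens": 8_000}},
        {"model": "claude-opus-4-7", "max_tokens": 16_000},
    ]
    print(find_hardcoded_budgets(requests))  # [0]
```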

Benchmarks that actually matter

Anthropic's numbers on agentic coding are the headline:

| Benchmark | Opus 4.6 | Opus 4.7 | Delta |
|---|---|---|---|
| SWE-bench Verified | 80.8% | 87.6% | +6.8pp |
| SWE-bench Pro | 53.4% | 64.3% | +10.9pp |
| CursorBench | 58% | 70% | +12pp |
| Rakuten-SWE-Bench | baseline | 3x resolved | n/a |
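The Delta column is in percentage points. You can also read the same scores as the share of previously unresolved tasks that each jump removes. The short worked check below uses only the three rows with absolute scores. The Rakuten row is reported as a multiple, so it is left out.

```python
# Scores (percent resolved) from the benchmark table.
SCORES = {
    "SWE-bench Verified": (80.8, 87.6),
    "SWE-bench Pro": (53.4, 64.3),
    "CursorBench": (58.0, 70.0),
}


def summarize(old: float, new: float) -> tuple[float, float]:
    """Return (percentage-point delta, relative reduction in unresolved tasks)."""
    delta_pp = new - old
    unresolved_old = 100.0 - old
    unresolved_new = 100.0 - new
    relative_reduction = (unresolved_old - unresolved_new) / unresolved_old
    return round(delta_pp, 1), round(relative_reduction, 3)


for name, (old, new) in SCORES.items():
    pp, rel = summarize(old, new)
    print(f"{name}: +{pp}pp, {rel:.1%} fewer unresolved tasks")
```

By this measure, the Verified jump removes about 35% of the previously unresolved tasks and the Pro jump removes about 23%.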

SWE-bench Pro is the harder test — real-world issues from production repos, not curated ones. A 10-point jump there is the number I'd trust for "will this feel better on my codebase?" Vision improvements also matter more than they sound: 3.75MP is enough to read a full-screen IDE capture without Claude guessing at the text.
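Whether a given screenshot fits under the 3.75MP figure is simple arithmetic. A 2560×1440 capture is about 3.69MP and fits. A 4K capture (about 8.29MP) does not. The sketch below assumes the limit is a total pixel count. The API may also apply per-side limits or resize images itself, so treat this as a pre-flight estimate. The helper name is hypothetical.

```python
import math

MAX_PIXELS = 3_750_000  # 3.75 megapixels, per the figure quoted in this post


def downscale_factor(width: int, height: int, max_pixels: int = MAX_PIXELS) -> float:
    """Linear scale factor (<= 1.0) that brings an image under the pixel budget."""
    pixels = width * height
    if pixels <= max_pixels:
        return 1.0
    # Scaling both sides by f scales the pixel count by f**2.
    return math.sqrt(max_pixels / pixels)


for w, h in [(1920, 1080), (2560, 1440), (3840, 2160)]:
    f = downscale_factor(w, h)
    print(f"{w}x{h}: {w * h / 1e6:.2f} MP, scale {f:.3f}")
```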

Anthropic is also upfront that their Mythos Preview model (available through Project Glasswing, invitation-only, defensive cybersecurity focused) outperforms 4.7 on some evals. That's the "narrowly retaking the lead" part — Opus 4.7 is the most capable generally available Claude. Mythos exists for a reason.

The new xhigh effort level

Claude Code now has five effort levels: low, medium, high, xhigh, max. xhigh sits between high and max, giving a finer-grained lever on the reasoning-vs-latency tradeoff.

What changed in practice:

- Claude Code defaults to xhigh on every plan when using Opus 4.7

- Other models fall back to high when you try xhigh (it's Opus-4.7-only)

- You open the slider with /effort: arrow keys to adjust, Enter to confirm

- Auto mode (the classifier that picks effort per turn) is now available to Max subscribers on Opus 4.7 without the --enable-auto-mode flag
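Here is a toy model of the fallback rule. It is not Claude Code's implementation, and the function and constant names are hypothetical. It encodes only two facts from this post: there are five ordered levels, and xhigh is limited to Opus 4.7. In this sketch, every other level passes through unchanged.

```python
# Ordered from least to most reasoning, as listed in this post.
EFFORT_LEVELS = ("low", "medium", "high", "xhigh", "max")
XHIGH_MODELS = {"claude-opus-4-7"}  # xhigh is Opus-4.7-only


def resolve_effort(model: str, requested: str) -> str:
    """Apply the described fallback: xhigh degrades to high on other models."""
    if requested not in EFFORT_LEVELS:
        raise ValueError(f"unknown effort level: {requested!r}")
    if requested == "xhigh" and model not in XHIGH_MODELS:
        return "high"
    return requested


if __name__ == "__main__":
    print(resolve_effort("claude-opus-4-7", "xhigh"))  # xhigh
    print(resolve_effort("claude-opus-4-6", "xhigh"))  # high
```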

If you're already on Max, you don't have to think about it. The model ramps effort up for hard turns and back down for easy ones. If you're on Pro, xhigh is a reasonable default for everyday coding — save max for the gnarliest agentic runs.

What's in Claude Code 2.1.112 (and the 2.1.111 it patches)

2.1.112 is a single-fix patch: it fixes the "claude-opus-4-7 is temporarily unavailable" error that auto mode was throwing. The substance shipped in 2.1.111 the same day:

- Opus 4.7 xhigh available on all plans

- Auto mode for Max subscribers using Opus 4.7 (no flag needed)

- /ultrareview: parallel multi-agent code review. Run with no args for the current branch, or /ultrareview <PR#> for a specific PR

- /less-permission-prompts: scans your transcripts for read-only Bash and MCP calls, then drafts an allowlist for .claude/settings.json

- PowerShell tool on Windows (progressive rollout). Opt in with CLAUDE_CODE_USE_POWERSHELL_TOOL

- Read-only bash improvements: ls *.ts and cd <project-dir> && no longer trigger permission prompts

- Typo suggestions: claude udpate now suggests update

- Plan file naming: plans are named after the prompt (fix-auth-race-snug-otter.md) instead of random words

- /skills sorting by token count: press t
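The allowlist that /less-permission-prompts drafts is a permissions block in .claude/settings.json. Below is a minimal sketch of the idea. It assumes you already have a list of Bash commands pulled from your transcripts. It keeps commands that start with a hypothetical set of read-only prefixes and emits prefix rules in the Bash(command:*) form that Claude Code's permission settings have used. The real skill also covers MCP calls and makes its own decisions about what counts as read-only. Check the generated rules against the current settings documentation before merging them.

```python
import json

# Hypothetical set of command prefixes treated as read-only for this sketch.
READ_ONLY_PREFIXES = ("ls", "cat", "pwd", "git status", "git diff", "git log")


def draft_allowlist(observed_commands: list[str]) -> dict:
    """Turn observed read-only Bash commands into a settings.json-style allowlist."""
    rules = set()
    for cmd in observed_commands:
        cmd = cmd.strip()
        for prefix in READ_ONLY_PREFIXES:
            # Require a space after the prefix so "lsof" doesn't match "ls".
            if cmd == prefix or cmd.startswith(prefix + " "):
                rules.add(f"Bash({prefix}:*)")
    return {"permissions": {"allow": sorted(rules)}}


if __name__ == "__main__":
    seen = ["ls *.ts", "git status", "rm -rf build", "cat README.md", "ls"]
    print(json.dumps(draft_allowlist(seen), indent=2))
```

Anything that doesn't match a prefix, such as rm -rf build, is left out and keeps prompting. That is the safe default for a drafted allowlist.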

The QoL fixes matter as much as the headline features. The bash glob-pattern fix alone stops the "yes, you can run ls" prompt from firing 50 times a day.

The past two weeks of Claude Code, compressed

If you've been heads-down shipping, here's what landed between 2.1.101 and 2.1.112:

| Version | Date | What mattered |
|---|---|---|
| 2.1.112 | Apr 16 | Opus 4.7 auto-mode availability fix |
| 2.1.111 | Apr 16 | Opus 4.7 + xhigh + /ultrareview + PowerShell tool |
| 2.1.110 | Apr 15 | /tui fullscreen flicker-free rendering, push notifications tool, /focus separation |
| 2.1.108 | Apr 14 | ENABLE_PROMPT_CACHING_1H for 1-hour cache TTL, /recap, model can invoke built-in slash commands via Skill tool |
| 2.1.105 | Apr 13 | PreCompact hooks can block compaction, plugin monitors for background workers, EnterWorktree path parameter |
| 2.1.101 | Apr 10 | /team-onboarding for teammate ramp-up, OS CA certificate trust by default (enterprise TLS proxies work) |
| 2.1.98 | Apr 9 | Eight Bash tool permission security fixes, Perforce mode, Linux PID namespace sandboxing |
| 2.1.94 | Apr 7 | Default effort raised from medium to high for API-key/Bedrock/Team/Enterprise users |
| 2.1.92 | Apr 4 | /release-notes interactive picker, /cost per-model and cache-hit breakdown, /tag and /vim removed |
| 2.1.89 | Apr 1 | "defer" permission decision for headless sessions, CLAUDE_CODE_NO_FLICKER alt-screen rendering |

Three trend lines stand out:

1. Security hardening. 2.1.98 alone shipped eight Bash tool permission fixes. If you haven't updated, you're running with known permission bypasses. Run claude update today and confirm with claude --version.

2. Observability and control. /cost breakdowns, /recap session context, PreCompact hooks, and the less-permission-prompts skill are all pointing at the same thing: Claude Code treating the agent loop as something you measure and tune, not a black box.

3. The agent surface is growing. /ultrareview (parallel agents), plugin monitors (background workers), and routines (cloud-hosted autonomous runs) are all different answers to "how do I run more Claude at once without babysitting it?"
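If you script the security check, compare versions as numbers, not strings. As strings, "2.1.112" sorts before "2.1.98", which would flag a current install as outdated. The sketch below assumes the output of claude --version contains a dotted version number somewhere in the string. The 2.1.98 floor comes from the release table, and the helper names are hypothetical.

```python
import re

SECURITY_FLOOR = "2.1.98"  # release with the eight Bash permission fixes


def parse_version(text: str) -> tuple[int, ...]:
    """Extract the first dotted version number from arbitrary output text."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        raise ValueError(f"no version found in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def below_floor(version_output: str, floor: str = SECURITY_FLOOR) -> bool:
    """True if the installed version predates the floor (numeric tuple comparison)."""
    return parse_version(version_output) < parse_version(floor)


if __name__ == "__main__":
    print(below_floor("2.1.97 (Claude Code)"))   # True
    print(below_floor("2.1.112 (Claude Code)"))  # False
```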

Should you migrate to Opus 4.7 today?

For most of you, yes. Three checks before you flip:

Hard-coded model IDs. If your production code pins claude-opus-4-6, the upgrade isn't free: test your prompts on 4.7. The new tokenizer also means the same English text takes more tokens (roughly 1.35x, going by the drop from 750k to 555k words per window), which matters if you're close to context limits.
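One way to find those pins before switching is to search the codebase for the old ID. Below is a minimal Python sketch. The file-suffix list is an assumption about where model IDs tend to live, so widen it for your repo. Files without a suffix, such as .env, are skipped as written. The function names are hypothetical.

```python
import re
from pathlib import Path

# Word boundary avoids matching longer IDs such as "claude-opus-4-60".
PINNED_ID = re.compile(r"claude-opus-4-6\b")
SOURCE_SUFFIXES = {".py", ".ts", ".js", ".json", ".yaml", ".yml", ".toml"}


def find_pins_in_text(name: str, text: str) -> list[tuple[str, int, str]]:
    """Return (file, line number, line) for every line that pins the 4.6 model ID."""
    return [
        (name, lineno, line.strip())
        for lineno, line in enumerate(text.splitlines(), start=1)
        if PINNED_ID.search(line)
    ]


def scan_tree(root: str) -> list[tuple[str, int, str]]:
    """Walk a source tree and collect pinned model IDs from likely code/config files."""
    hits = []
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="ignore")
            hits.extend(find_pins_in_text(str(path), text))
    return hits


if __name__ == "__main__":
    for file, lineno, line in scan_tree("."):
        print(f"{file}:{lineno}: {line}")
```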

Extended thinking contracts. If you were relying on the thinking block in Opus 4.6, note that 4.7 uses adaptive thinking instead. Behavior is similar but not identical.

Cost ceilings. Price didn't change, but xhigh effort is more tokens per turn. If your /cost is already spiking, the new default on Claude Code will make it spike harder. Use /effort to step down to high if you need to.
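Output tokens cost five times as much as input tokens, so extra reasoning at a higher effort level shows up mostly on the output side. Below is a back-of-envelope calculator at the list prices quoted above. It assumes reasoning tokens bill as output tokens, as thinking tokens have on earlier Claude models, and it ignores caching and batch discounts. The function names are hypothetical.

```python
# Opus 4.7 list prices quoted in this post (USD per million tokens).
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00


def turn_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """List-price cost of one turn, ignoring caching and batch discounts."""
    return (
        input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    ) / 1_000_000


def extra_cost_usd(extra_output_tokens_per_turn: int, turns: int) -> float:
    """Added spend if a higher effort level adds this many output tokens per turn."""
    return turns * turn_cost_usd(0, extra_output_tokens_per_turn)


if __name__ == "__main__":
    print(f"${turn_cost_usd(1_000_000, 100_000):.2f}")  # $7.50
    print(f"${extra_cost_usd(20_000, 100):.2f}")        # $50.00
```

For example, if xhigh added 20,000 output tokens per turn (a made-up figure), 100 turns would cost an extra $50.00 at list price.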

For everything else — agentic workflows, long-context work, computer use, coding at the edge of what Opus 4.6 could handle — 4.7 is the upgrade. The SWE-bench Pro jump is the closest thing to "actually feels smarter on a real codebase" that the benchmark industry has to offer.

If you're running agentic workflows across WotAI content systems, Flow and Echo both target the orchestration layer that a model like this unlocks — Flow automates the workflow scaffolding while you focus on what the agent actually decides.

The bigger picture

Anthropic shipped Opus 4.7 and three Claude Code releases (2.1.110, 2.1.111, 2.1.112) in 48 hours. The pace isn't accidental. Auto mode, xhigh, parallel review, and cloud routines are the same bet seen from four angles: the model decides when to think harder, you stop babysitting permission prompts, agents work in parallel, and Claude keeps running after you close your laptop.

The bet is that the next unit of developer productivity isn't "typing fewer characters" — it's "making the agent loop tighter." Opus 4.7 is the model half of that bet. Claude Code 2.1.112 is the runtime half.

The migration cost is roughly zero. The upside is a model that actually finishes more production issues than the one you ran yesterday.

FAQ

What is Claude Opus 4.7?

Claude Opus 4.7 is Anthropic's most capable generally available model, released April 16, 2026. It improves on Opus 4.6 in agentic coding, multistep reasoning, vision, and tool use. SWE-bench Verified jumped from 80.8% to 87.6%, and SWE-bench Pro from 53.4% to 64.3%.

How much does Claude Opus 4.7 cost?

$5 per million input tokens and $25 per million output tokens — the same as Opus 4.6. Priority tier, batch API discounts, and prompt caching are all supported. The Batch API supports up to 300k output tokens via the output-300k-2026-03-24 beta header.
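Below is a sketch of attaching that beta header to a batch job. The model ID comes from the error message quoted at the top of this post, and the 128k and 300k ceilings come from this post. Adding the header only when max_tokens exceeds 128k is an assumption of this sketch. The function builds plain dicts in the Message Batches custom_id/params shape, so it runs without an API key. The function name is hypothetical.

```python
BETA_300K = "output-300k-2026-03-24"  # beta header value quoted in this post
STANDARD_MAX_OUTPUT = 128_000
BATCH_BETA_MAX_OUTPUT = 300_000


def batch_request(custom_id: str, prompt: str, max_tokens: int) -> tuple[dict, dict]:
    """Build one batch entry plus the headers it needs, as (request, headers)."""
    if max_tokens > BATCH_BETA_MAX_OUTPUT:
        raise ValueError("max_tokens exceeds the 300k batch ceiling")
    # Assumption: the beta header is only needed above the standard 128k ceiling.
    headers = {"anthropic-beta": BETA_300K} if max_tokens > STANDARD_MAX_OUTPUT else {}
    request = {
        "custom_id": custom_id,
        "params": {
            "model": "claude-opus-4-7",
            "max_tokens": max_tokens,
            "messages": [{"role": "user", "content": prompt}],
        },
    }
    return request, headers
```

With the official Python SDK, you would pass these along as client.messages.batches.create(requests=[request], extra_headers=headers). Verify the beta name and limits against Anthropic's current docs before relying on them.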

What's the new xhigh effort level in Claude Code?

xhigh sits between high and max on the effort slider, and is only available on Opus 4.7. Claude Code defaults to xhigh for Opus 4.7 across every plan. Open the slider with /effort to adjust. Other models fall back to high when xhigh is selected.

What changed in Claude Code 2.1.112?

2.1.112 is a one-bug patch that fixes "claude-opus-4-7 is temporarily unavailable" errors when running in auto mode. The substance shipped in 2.1.111 the same day — Opus 4.7 support, xhigh effort, auto mode for Max subscribers, /ultrareview, and the PowerShell tool on Windows.

Is Opus 4.7 available on Claude Code auto mode?

Yes, for Max subscribers. Auto mode no longer requires the --enable-auto-mode flag when using Opus 4.7. The classifier picks an effort level per turn instead of running everything at one fixed level.

What's the context window on Opus 4.7?

1M tokens, with a new tokenizer. That translates to roughly 555k English words per context window, down from about 750k on Opus 4.6 with the same token budget, because the new tokenizer spends more tokens on English prose. The trade-off is a tokenizer that is more efficient on code and structured data. Max output is 128k tokens.

Is Claude Opus 4.7 available on GitHub Copilot?

Yes. GitHub announced Opus 4.7 availability for Copilot Pro+, Business, and Enterprise plans on the same day. Through April 30, 2026 it carries a 7.5x premium request multiplier. Business and Enterprise admins need to enable the Opus 4.7 policy before users can select it.
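A 7.5x multiplier means each Opus 4.7 interaction draws 7.5 premium requests from your allowance. Below is a tiny sketch of that arithmetic. The 1,500-request allowance in the example is a hypothetical figure, not a quoted plan limit, and the function names are hypothetical.

```python
OPUS_47_MULTIPLIER = 7.5  # premium request multiplier quoted through April 30, 2026


def premium_requests_used(interactions: int, multiplier: float = OPUS_47_MULTIPLIER) -> float:
    """Premium requests consumed by a number of Opus 4.7 interactions."""
    return interactions * multiplier


def interactions_affordable(allowance: int, multiplier: float = OPUS_47_MULTIPLIER) -> int:
    """Whole Opus 4.7 interactions that fit in a premium-request allowance."""
    return int(allowance // multiplier)


if __name__ == "__main__":
    print(premium_requests_used(10))       # 75.0
    print(interactions_affordable(1_500))  # 200 (hypothetical allowance)
```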

What's Claude Mythos Preview?

Mythos Preview is a separate research model available through Project Glasswing for defensive cybersecurity workflows. Access is invitation-only. Anthropic disclosed that Mythos outperforms Opus 4.7 on some benchmarks — Opus 4.7 is the most capable generally available Claude, not the most capable Claude overall.

Should I migrate from Claude Opus 4.6 to 4.7?

For most workloads, yes. Price is unchanged, benchmarks are materially better, and vision and agentic use cases see the biggest lifts. Check three things before migrating: hard-coded model IDs in production code, any reliance on the thinking block (now "adaptive thinking"), and token-count assumptions (the new tokenizer uses more tokens for the same English text).

Does Claude Opus 4.7 support extended thinking?

Not in the same form as 4.6. Opus 4.7 uses adaptive thinking — the model decides when to reason longer. If your pipeline depended on explicit thinking budgets, retest. Sonnet 4.6 and Haiku 4.5 still support the older extended thinking interface.


Related Posts

Claude Opus 4.8 and dynamic workflows: what actually shipped

Claude Opus 4.8 brings dynamic workflows to Claude Code and a model that flags its own mistakes. What actually changed, the latest Claude Code version, and what it means for n8n users.

Claude Code's /code-review vs /ce-code-review: when each one wins

Claude Code ships /code-review and /simplify natively. The compound-engineering plugin ships /ce-code-review and /ce-simplify-code on top. They look like duplicates – they aren't. Here's a side-by-side, the quick-review short-circuit you probably missed, and a decision matrix for which to use when.

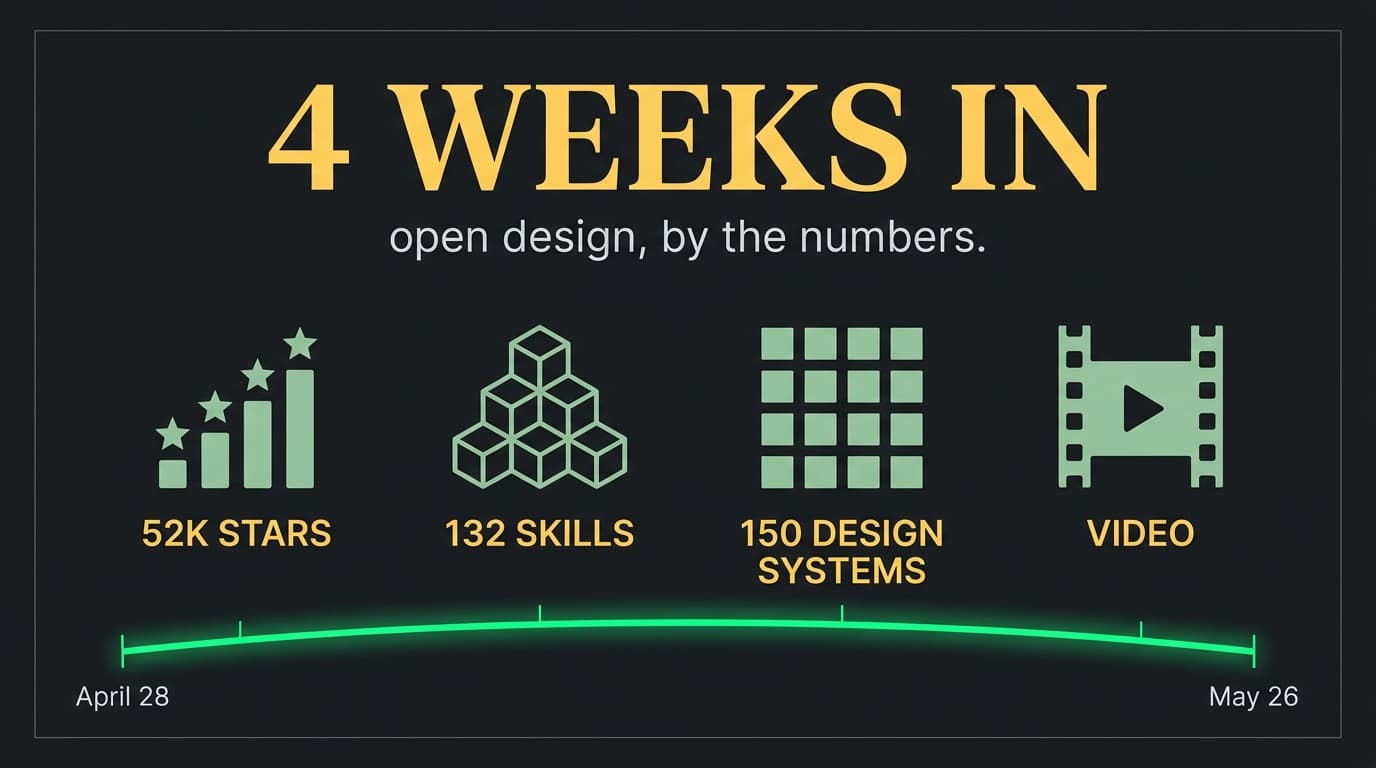

Open Design, 4 weeks in: should an SMB owner touch it yet?

Open Design shipped April 28 with 19 skills and 71 design systems. Four weeks later it's at 132 skills, 150 design systems, 8 stable releases, 52K stars, and now generates video. Here's the SMB go/no-go.