Anthropic's multi-agent code review: what it means for workflow automation

Multi-agent AI just went mainstream. On March 9, Anthropic launched Code Review for Claude Code, a system that deploys multiple specialist AI agents to review pull requests in parallel. It's not a single AI reading your code. It's a team of agents, each examining your PR from a different angle, with an aggregator that ranks and deduplicates their findings.

This matters beyond code review. The architecture Anthropic chose (parallel specialists plus an aggregator) is the same pattern that makes complex workflows reliable. And it validates something we've been building toward at WotAI for years.

How Anthropic's AI code review works

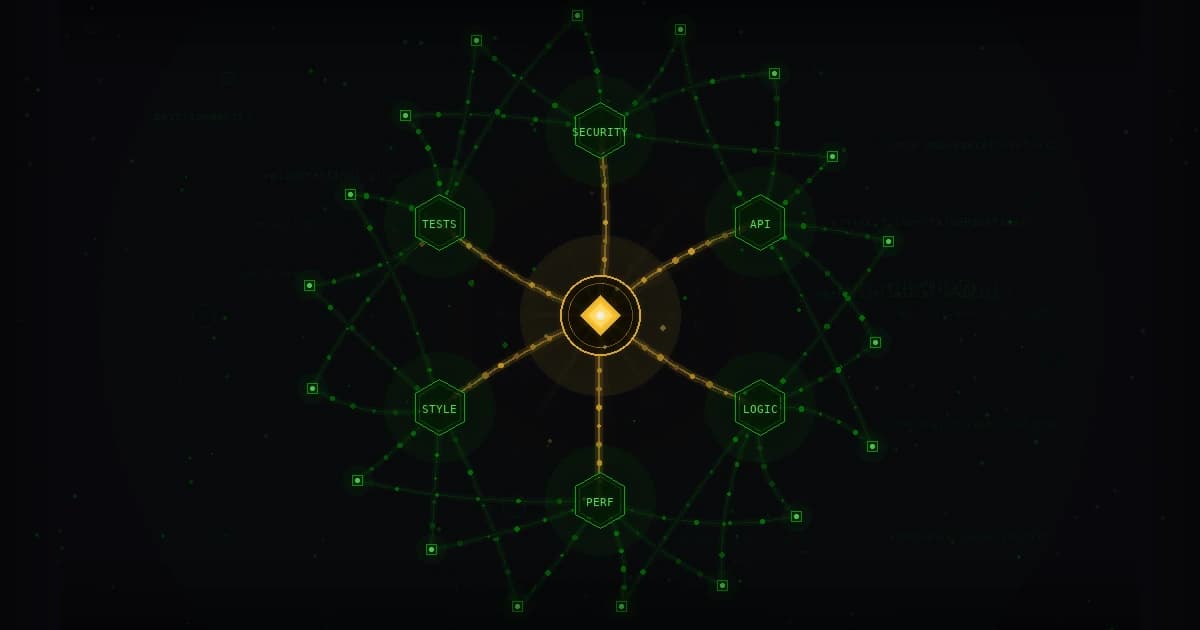

When a PR opens in an enabled repository, the system spins up multiple Claude agents. Each agent specializes in a different dimension of code quality:

- One checks for cross-file implications and broken assumptions

- Another validates parameter handling and state path consistency

- Others look for potential downstream regressions

- Some enforce team-specific review rules too complex for static analyzers

After the agents finish their parallel analysis, a "critic" layer validates every finding. Then an aggregation agent consolidates the results, removes duplicates, and ranks issues by severity before posting comments on GitHub.
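The flow described above can be sketched in a few lines of Python. This is an illustration of the pattern, not Anthropic's implementation: the `Finding` type, the three specialist functions, the critic's confidence threshold, and the severity scale are all hypothetical, and the "agents" are stub functions rather than model calls.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    message: str
    severity: int      # hypothetical scale: higher is more severe
    confidence: float  # hypothetical score the critic uses to filter


# Stub specialists. In a real system each would call a model with its own prompt.
async def cross_file_agent(diff: str) -> list[Finding]:
    return [Finding("api.py", 42, "caller still passes removed argument", 3, 0.9)]


async def parameter_agent(diff: str) -> list[Finding]:
    return [
        Finding("api.py", 42, "caller still passes removed argument", 3, 0.8),
        Finding("db.py", 7, "timeout may be None here", 2, 0.4),
    ]


async def regression_agent(diff: str) -> list[Finding]:
    return [Finding("cache.py", 19, "eviction order changed", 1, 0.95)]


def critic(findings: list[Finding], min_confidence: float = 0.5) -> list[Finding]:
    """Validation pass: drop findings below the confidence threshold."""
    return [f for f in findings if f.confidence >= min_confidence]


def aggregate(findings: list[Finding]) -> list[Finding]:
    """Deduplicate by location and message, then rank by severity (highest first)."""
    unique: dict[tuple[str, int, str], Finding] = {}
    for f in findings:
        key = (f.file, f.line, f.message)
        # Keep the most confident copy of a duplicated finding.
        if key not in unique or f.confidence > unique[key].confidence:
            unique[key] = f
    return sorted(unique.values(), key=lambda f: f.severity, reverse=True)


async def review(diff: str) -> list[Finding]:
    agents = [cross_file_agent, parameter_agent, regression_agent]
    # Fan out: all specialists run concurrently on the same diff.
    results = await asyncio.gather(*(agent(diff) for agent in agents))
    flat = [f for batch in results for f in batch]
    # Fan in: validate first, then deduplicate and rank.
    return aggregate(critic(flat))


if __name__ == "__main__":
    for finding in asyncio.run(review("example diff")):
        print(finding.severity, finding.file, finding.line, finding.message)
```

With these stubs, the low-confidence `db.py` finding is dropped by the critic, the duplicated `api.py` finding is collapsed into one, and the remaining two findings come back ordered by severity.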

The results are striking. In Anthropic's internal data, 54% of pull requests now receive substantive comments, up from 16% with their previous approach. Less than 1% of findings were marked as incorrect by engineers. Each review takes about 20 minutes and costs $15 to $25.

The pattern: parallel specialists, central aggregator

Here's what's interesting. Anthropic didn't build a single, monolithic AI reviewer. They built a team.

This is the same architecture that powers effective automation in every domain: break a complex task into specialized sub-tasks, run them in parallel, then aggregate the results. It works for code review. It works for content pipelines. It works for data processing, customer onboarding, and incident response.

The pattern shows up everywhere this month:

- Cursor Automations (March 5): Always-on AI agents that trigger from Slack messages, Linear issues, merged PRs, or PagerDuty incidents. Each automation spins up a cloud sandbox, follows instructions, and verifies its own output. Cursor runs hundreds of automations per hour.

- Zoom's Agentic AI Platform (March 10): Custom AI agents that orchestrate workflows across meetings, calls, chat, and CRM systems. Users create agents with natural language prompts. No coding required.

- Anthropic Code Review (March 9): The multi-agent PR review system we're discussing here.

Three major platforms, all launching multi-agent systems within six days of each other. This isn't coincidence. It's convergence.

Why multi-agent beats single-agent for complex work

A single AI agent reviewing a 500-line PR will miss things. It has one perspective, one pass, and limited context. The same is true for a single agent generating content, processing data, or managing a workflow.

Multiple agents working in parallel solve this by:

- Specializing. Each agent focuses on what it does best. Anthropic's AI code review agents don't all look for the same bugs. They examine different dimensions of quality.

- Cross-checking. When multiple agents analyze the same input from different angles, errors get caught. Anthropic's critic layer validates findings before they're posted.

- Scaling. Because the specialists run in parallel, adding another check adds little wall-clock time: the review takes roughly as long as the slowest agent, not the sum of all of them. Anthropic's 20-minute review time covers multiple specialist passes.

This is exactly how production workflows should work. Not one giant prompt hoping for the best, but orchestrated specialists with validation steps.
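The scaling point can be checked with a toy example. The sketch below uses `asyncio.sleep` as a stand-in for model latency (the check names and durations are made up) and compares running the checks one after another with running them concurrently.

```python
import asyncio
import time

# Hypothetical checks with simulated latency in seconds.
CHECKS = {"cross_file": 0.3, "parameters": 0.2, "regressions": 0.1}


async def run_check(name: str, seconds: float) -> str:
    await asyncio.sleep(seconds)  # stand-in for a model call
    return name


async def sequential() -> float:
    """Run the checks one at a time and return elapsed wall-clock seconds."""
    start = time.perf_counter()
    for name, seconds in CHECKS.items():
        await run_check(name, seconds)
    return time.perf_counter() - start


async def parallel() -> float:
    """Run all checks concurrently and return elapsed wall-clock seconds."""
    start = time.perf_counter()
    await asyncio.gather(*(run_check(n, s) for n, s in CHECKS.items()))
    return time.perf_counter() - start


if __name__ == "__main__":
    seq = asyncio.run(sequential())
    par = asyncio.run(parallel())
    # Sequential time approaches the sum of the delays (about 0.6 s);
    # parallel time approaches the longest single delay (about 0.3 s).
    print(f"sequential: {seq:.2f}s, parallel: {par:.2f}s")
```

This only models latency. In a real deployment, rate limits can cap how many agents actually run at once, and total cost still grows with the number of agents even when wall-clock time does not.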

What this means for workflow builders

If you're building automation with n8n or similar tools, the Anthropic announcement is a signal. The industry is moving from "one AI call does everything" to "multiple AI agents, each with a specific job, coordinated by an orchestrator."

Here's what that looks like in practice:

- Content workflows: Instead of one prompt generating a blog post, use separate agents for research, drafting, SEO optimization, and brand voice review. Then aggregate and review.

- Data processing: Split incoming data across specialized handlers. One validates format, another enriches with external data, a third classifies. Then merge results.

- Customer onboarding: Parallel agents handle email verification, CRM updates, welcome sequences, and access provisioning. An orchestrator tracks completion.
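As one concrete shape for the onboarding case, here is a minimal sketch of an orchestrator that runs the steps concurrently and records which ones completed. The step functions, the `onboard` helper, and the customer ID are hypothetical stubs for illustration; they are not n8n nodes or a real CRM API.

```python
import asyncio
from typing import Awaitable, Callable

Step = Callable[[str], Awaitable[None]]


async def verify_email(customer_id: str) -> None:
    await asyncio.sleep(0)  # stand-in for an API call


async def update_crm(customer_id: str) -> None:
    await asyncio.sleep(0)


async def send_welcome_sequence(customer_id: str) -> None:
    await asyncio.sleep(0)


async def provision_access(customer_id: str) -> None:
    # Simulated failure so the status report has something to show.
    raise RuntimeError("license pool exhausted")


async def onboard(customer_id: str, steps: dict[str, Step]) -> dict[str, str]:
    """Run every step concurrently and report 'done' or the error per step."""
    names = list(steps)
    # return_exceptions=True collects failures as values instead of raising
    # the first one, so every step's outcome is recorded.
    results = await asyncio.gather(
        *(steps[name](customer_id) for name in names),
        return_exceptions=True,
    )
    return {
        name: f"failed: {result}" if isinstance(result, Exception) else "done"
        for name, result in zip(names, results)
    }


STEPS: dict[str, Step] = {
    "email_verification": verify_email,
    "crm_update": update_crm,
    "welcome_sequence": send_welcome_sequence,
    "access_provisioning": provision_access,
}

if __name__ == "__main__":
    status = asyncio.run(onboard("cust-001", STEPS))
    for name, state in status.items():
        print(f"{name}: {state}")
```

The orchestrator's only job here is tracking completion. Retrying or escalating a failed step would be a separate decision layered on top of the returned status map.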

At WotAI, we've built hundreds of these multi-agent workflows for clients over 3+ years as an n8n Certified Expert Partner. The pattern works. And now the biggest names in AI are validating it at scale.

The cost question

Anthropic's AI code review costs $15 to $25 per PR review. That sounds expensive until you compare it to the cost of a human reviewer spending 30 to 60 minutes on the same PR, or worse, the cost of a production bug that slipped through.

The same math applies to any multi-agent workflow. Yes, running multiple agents costs more than a single call. But the accuracy gains and error reduction can pay for themselves many times over. In Anthropic's internal data, engineers marked fewer than 1% of findings as incorrect, which supports the quality case.
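To make the comparison concrete, here is a back-of-the-envelope calculation. The $15 to $25 review cost and the 30 to 60 minute human review time are the figures quoted above; the fully loaded hourly rate is an assumption chosen only for illustration, so substitute your own.

```python
def human_review_cost(minutes: float, hourly_rate: float) -> float:
    """Cost of a human review given its duration and a fully loaded hourly rate."""
    return minutes / 60 * hourly_rate


def break_even_rate(ai_cost: float, minutes: float) -> float:
    """Hourly rate at which a human review of this length costs the same as the AI review."""
    return ai_cost * 60 / minutes


ASSUMED_HOURLY_RATE = 100.0  # hypothetical; not a figure from the article

if __name__ == "__main__":
    for minutes in (30, 60):
        cost = human_review_cost(minutes, ASSUMED_HOURLY_RATE)
        print(f"{minutes} min human review at ${ASSUMED_HOURLY_RATE:.0f}/h: ${cost:.2f}")
    for ai_cost in (15, 25):
        for minutes in (30, 60):
            rate = break_even_rate(ai_cost, minutes)
            print(f"AI at ${ai_cost}, human at {minutes} min: break-even ${rate:.0f}/h")
```

Across those ranges the break-even hourly rate runs from $15 (a $15 review against a 60-minute human pass) to $50 (a $25 review against a 30-minute pass). The calculation treats the two as substitutes; if the AI review runs alongside a human review rather than replacing it, the relevant comparison is the cost of the bugs it catches.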

What comes next

Multi-agent is the default now. Not an experiment, not a research paper. Anthropic, Cursor, and Zoom are shipping it in production products used by millions.

For workflow builders, this means:

- Design for orchestration. Build workflows that coordinate multiple agents, not single-agent chains.

- Add validation steps. Anthropic's critic layer checks every finding before it is posted, and engineers marked fewer than 1% of findings as incorrect. Every multi-agent workflow needs a verification pass.

- Specialize your agents. Generic prompts produce generic results. Give each agent a specific job with specific context.

WotAI Flow helps you build these kinds of workflows for n8n. Describe what you need, and Flow generates validated workflow JSON with the right nodes and connections. It's how we've been building multi-agent automations for 300+ client workflows, now packaged as a tool anyone can use.

